Google Gemini: Flex/Priority Inference Cuts AI Costs 35%

Alexander Bazilevich is a CRM expert and Top Salesforce Partner with over 17 years of sales experience in the IT industry. He specializes in transforming corporate goals into profits through cross-functional collaboration and innovative business solutions, with deep expertise in business systems and IT products.

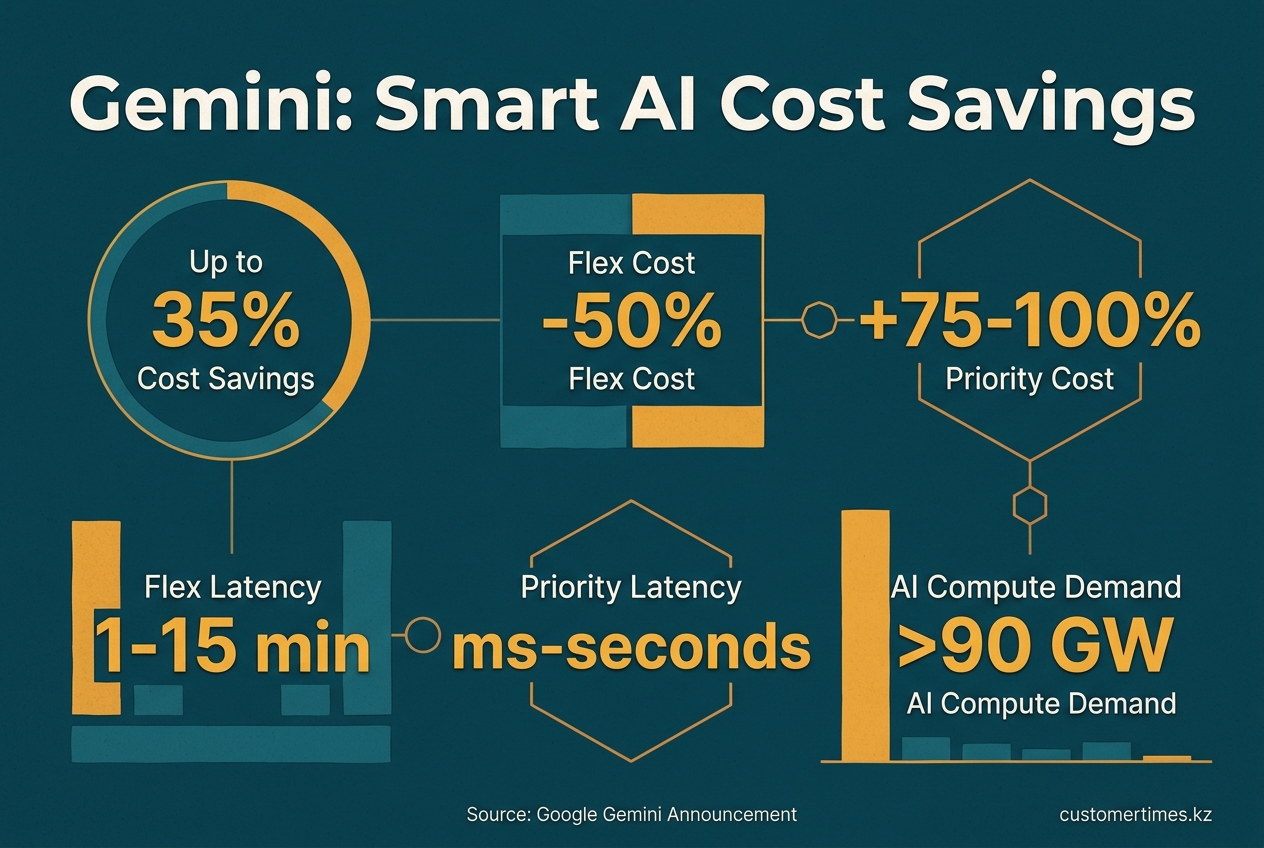

Google's Gemini API introduces Flex & Priority Inference tiers, cutting AI costs up to 35% by optimizing latency and efficiency.

The April 2026 launch of Google Gemini's Flex Inference and Priority Inference cuts AI costs by up to 35%, marking the end of "one-size-fits-all" AI pricing. Instead of a single rate, enterprises can now tag each API call with a service_tier parameter, choosing between a thrifty batch mode (Flex) or a premium real-time service (Priority). Early users report this simple switch dramatically reduces monthly AI bills while keeping user-facing features within strict latency SLOs.

What are Flex Inference and Priority Inference for the Google Gemini API?

Google's Flex and Priority Inference are service tiers for the Gemini API. Flex Inference offers a low-cost, high-latency option for non-urgent tasks, processing them in a queue. Priority Inference provides a premium, low-latency service for time-sensitive requests, ensuring immediate responses at a higher cost.

| Tier | Price vs. Standard | Typical Latency | Sheddable? | Overflow Rule |
|---|---|---|---|---|
| Flex | -50% | 1-15 min queue | Yes, off-peak capacity | None (best effort) |
| Priority | +75-100% | Milliseconds to seconds | No, highest priority | Auto-falls back to Standard if quota exceeded |

From a developer's perspective, the implementation is seamless. Calls are made to the same synchronous GenerateContent or Interactions endpoint, which returns a header indicating the tier used. This allows FinOps dashboards to precisely track spending, attributing costs to specific tasks like a CRM update versus a live chatbot response.
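As a rough illustration of that attribution step, the sketch below groups token usage by task and by the tier reported in the response. The header name `x-service-tier`, the log record layout, and the task labels are all assumptions made for this example; the article only states that a header reports the tier used.

```python
from collections import defaultdict

# Hypothetical header name, chosen for illustration only.
TIER_HEADER = "x-service-tier"

def attribute_tokens(call_log):
    """Sum token usage per (task, tier) pair from logged responses."""
    totals = defaultdict(int)
    for record in call_log:
        # HTTP header names are case-insensitive, so normalize before lookup.
        headers = {k.lower(): v for k, v in record["headers"].items()}
        tier = headers.get(TIER_HEADER, "unknown")
        totals[(record["task"], tier)] += record["tokens"]
    return dict(totals)

# Example log entries (made-up numbers).
call_log = [
    {"task": "crm_update", "tokens": 1200, "headers": {"X-Service-Tier": "flex"}},
    {"task": "live_chat", "tokens": 300, "headers": {"x-service-tier": "priority"}},
    {"task": "crm_update", "tokens": 800, "headers": {"x-service-tier": "flex"}},
]

for (task, tier), tokens in sorted(attribute_tokens(call_log).items()):
    print(f"{task:12s} {tier:9s} {tokens}")
```

A dashboard could multiply these per-tier token totals by the applicable rate to separate, say, CRM update spend from live chatbot spend.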

Why Tiered Inference Arrived in 2026

Tiered inference is a direct response to unsustainable growth in AI inference demand, which is projected to surpass 90 GW and overtake training workloads before 2030. Because hyperscalers cannot build data centers fast enough, the strategy has shifted from deploying more GPUs to optimizing hardware utilization. Older, less powerful hardware is now repurposed for high-latency Flex queues, while the latest high-performance pods are reserved for Priority traffic. This approach serves both tiers from a single physical fleet, maximizing the value of existing silicon.

"We can't keep scaling compute, so the industry must scale efficiency instead."

- Kaoutar El Maghraoui, IBM Research, 2026 trends briefing

Enterprise Wiring Patterns Already Live

- Dynamic load balancers: An international consumer goods distributor routes each incoming prompt through an internal router. If the request originates from a point-of-sale system, it receives Priority; if it is a nightly batch job to score millions of loyalty accounts, it is assigned to Flex. The switch is managed with a simple header modification, eliminating the need for a separate batch-processing codebase.

- Agentic workflows: Complex agentic workflows that chain multiple model calls for tasks like research and summarization are ideal for Flex. By default, the agent's internal "thinking" processes run on the 50% discount tier, with only the final, user-facing answer being upgraded to Priority.

- Edge spill-over: Retailers using on-device Gemini models can implement an intelligent spill-over pattern. Initial requests target a local micro-data-center using Priority. During traffic spikes like Black Friday, if the local quota is exceeded, requests seamlessly fall back to the Standard cloud tier, preventing user-facing errors.
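The three patterns above can be sketched as a small routing function. This is a minimal illustration, not any company's actual router: the source labels (`pos`, `nightly_batch`, `agent`, `edge`), the `InferenceRequest` type, and the quota counter are hypothetical names introduced here.

```python
from dataclasses import dataclass

@dataclass
class InferenceRequest:
    source: str                # hypothetical labels: "pos", "nightly_batch", "agent", "edge"
    final_answer: bool = True  # False for an agent's intermediate steps

def choose_tier(req: InferenceRequest) -> str:
    """Pick the tier to request, following the three patterns described above."""
    if req.source == "agent":
        # Intermediate agent steps stay on Flex; only the final answer is upgraded.
        return "priority" if req.final_answer else "flex"
    if req.source in {"pos", "edge"}:
        return "priority"
    # Everything else (for example nightly batch scoring) tolerates queueing.
    return "flex"

def served_tier(requested: str, priority_quota_left: int) -> str:
    """Model the overflow rule: Priority falls back to Standard when quota runs out."""
    if requested == "priority" and priority_quota_left <= 0:
        return "standard"
    return requested

print(choose_tier(InferenceRequest("pos")))
print(choose_tier(InferenceRequest("agent", final_answer=False)))
print(served_tier("priority", priority_quota_left=0))
```

Keeping the decision in one function means the tier policy can change without touching the code paths that build prompts.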

Pricing That Changes With the Clock

Google does not use separate SKUs for these tiers; discounts and premiums are applied automatically at billing. The Flex tier's 50% discount is achieved by utilizing idle compute capacity, similar to spot VMs, while its synchronous nature eliminates the need for developers to refactor code for asynchronous processing. The 75-100% premium for Priority guarantees a quality-of-service (QoS) threshold and includes a "graceful downgrade" policy, moving burst traffic to the Standard tier instead of throttling it.
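To make the billing arithmetic concrete, here is a small worked example. The multipliers come from the table earlier in the article (Flex at 50% off, Priority at a 75-100% premium); the Standard rate of $2 per million tokens and the traffic mix are made-up figures used only for illustration.

```python
def monthly_cost(tokens_by_tier, standard_rate_per_m, priority_premium=0.75):
    """Estimate spend from per-tier token counts and the Standard rate per 1M tokens."""
    multipliers = {
        "flex": 0.50,                       # 50% discount
        "standard": 1.00,
        "priority": 1.0 + priority_premium, # 75-100% premium
    }
    return sum(
        tokens / 1_000_000 * standard_rate_per_m * multipliers[tier]
        for tier, tokens in tokens_by_tier.items()
    )

# Hypothetical monthly mix: 600M Flex, 300M Standard, 100M Priority tokens.
mix = {"flex": 600_000_000, "standard": 300_000_000, "priority": 100_000_000}
tiered = monthly_cost(mix, standard_rate_per_m=2.0)
flat = monthly_cost({"standard": sum(mix.values())}, standard_rate_per_m=2.0)

print(f"tiered: ${tiered:,.2f}  flat: ${flat:,.2f}  saving: {1 - tiered / flat:.1%}")
```

With this particular mix the tiered bill comes out below the flat one even though a tenth of the traffic pays the Priority premium; a different mix would shift the result.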

Competitive Gap, for Now

Currently, Google holds a distinct advantage. Competitors like OpenAI and Anthropic primarily offer flat per-token pricing, while AWS Bedrock's "provisioned throughput" is a capacity reservation, not a latency-based service tier. This makes Google's offering the first true service-tier API, positioning Gemini as the most cost-effective option for latency-tolerant workloads without requiring a shift to asynchronous batch processing. Analysts predict rivals will introduce similar offerings soon.

Enterprises facing monthly AI compute bills in the "tens of millions" now treat Flex as an internal carbon credit: every 1M Flex tokens saves ~0.4 kWh, which aggregates to entire megawatt-hours across million-user portfolios.

Practical Steps to Adopt

- Analyze Traffic: Before migrating, tag existing API calls with metadata (e.g., user-facing vs. internal) for a billing cycle to identify the natural split between Priority and Flex candidates.

- Create a Centralized Wrapper: Develop a thin internal library to add the `service_tier="flex"` header. This centralizes the logic and avoids modifying vendor SDK code directly.

- Monitor Downgrades: Implement logging to track when Priority requests are downgraded to Standard. Persistent downgrades indicate the provisioned quota needs adjustment, not a code change.

- Account for Jitter: While Flex queues average under 3 minutes, 95th-percentile latency can reach 15 minutes. Ensure tasks assigned to Flex can tolerate this potential jitter.

- Combine with Caching: For repetitive tasks, pair Flex inference with context caching. Cache hits are served at the discounted rate, while new requests still benefit from queue savings.
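The wrapper and downgrade-monitoring steps above could look roughly like the sketch below. It deliberately avoids any vendor SDK call: the transport is injected as a plain function, and the `served_tier` response field, the `TieredClient` class, and the `fake_send` stand-in are assumptions invented for this example.

```python
import logging
from typing import Callable, Dict

logger = logging.getLogger("tiered_inference")
VALID_TIERS = {"flex", "standard", "priority"}

class TieredClient:
    """Thin wrapper that tags each call with a tier and counts Priority downgrades."""

    def __init__(self, send: Callable[[Dict], Dict]):
        self._send = send          # injected transport; vendor SDK code stays untouched
        self.priority_calls = 0
        self.downgrades = 0

    def generate(self, prompt: str, tier: str = "flex") -> Dict:
        if tier not in VALID_TIERS:
            raise ValueError(f"unknown tier: {tier!r}")
        response = self._send({"prompt": prompt, "service_tier": tier})
        # "served_tier" is an assumed field standing in for the tier header.
        served = response.get("served_tier", tier)
        if tier == "priority":
            self.priority_calls += 1
            if served == "standard":
                self.downgrades += 1
                logger.warning("Priority request was served on Standard")
        return response

    def downgrade_rate(self) -> float:
        """Share of Priority requests that were downgraded to Standard."""
        if self.priority_calls == 0:
            return 0.0
        return self.downgrades / self.priority_calls

def fake_send(request: Dict) -> Dict:
    """Stand-in transport: downgrades Priority prompts that contain 'burst'."""
    served = request["service_tier"]
    if served == "priority" and "burst" in request["prompt"]:
        served = "standard"
    return {"text": "ok", "served_tier": served}

client = TieredClient(fake_send)
client.generate("score loyalty accounts")              # defaults to Flex
client.generate("answer live chat", tier="priority")
client.generate("burst traffic reply", tier="priority")
print(f"downgrade rate: {client.downgrade_rate():.0%}")
```

A persistently high downgrade rate from a counter like this is the signal, per the step above, that quota needs adjusting rather than code.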

Tiered inference transforms the Gemini API into a dual-purpose tool within a single endpoint: a cost-effective engine for background tasks and a premium service for real-time user interactions. Data from early adopters shows that shifting just 40% of internal traffic to Flex can free up enough budget to double the volume of Priority calls. This unique equation allows enterprises to enhance service quality while simultaneously reducing overall AI expenditure - a compelling value proposition for any organization.
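Whether a 40% shift actually funds a doubling of Priority volume depends on how large Priority spend is relative to internal spend. The sketch below works through that condition under stated assumptions: internal traffic is currently billed at the Standard rate, Flex is 50% cheaper, and the dollar figures are hypothetical.

```python
def flex_savings(internal_spend, shifted_share=0.40, flex_discount=0.50):
    """Budget freed by moving a share of Standard-rate internal traffic to Flex."""
    return internal_spend * shifted_share * flex_discount

def can_double_priority(internal_spend, priority_spend, **kwargs):
    """Doubling Priority volume costs one extra copy of current Priority spend."""
    return flex_savings(internal_spend, **kwargs) >= priority_spend

# Hypothetical monthly figures in dollars.
internal = 100_000
print(flex_savings(internal))                 # budget freed by the shift
print(can_double_priority(internal, 15_000))  # Priority spend below the savings
print(can_double_priority(internal, 30_000))  # Priority spend above the savings
```

Under these assumptions the savings equal 20% of internal spend, so the claim holds when current Priority spend is at most about a fifth of internal spend.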