2026: FDA, EMA Set Rules for AI in Drug Development

Pharma AI R&D faces new regulatory hurdles in 2026 as the FDA and EMA define what counts as credible AI evidence and how AI models are audited across their lifecycle. Prepare now!

In 2026, the FDA and EMA set new rules for AI in drug development, shifting from observers to gatekeepers of pharmaceutical R&D. The new framework calls for drug companies to follow strict protocols for AI model validation, risk management, and lifecycle documentation. Firms must now explain clearly how AI is used, maintain rigorous records, assess risks, and show that their models are credible for their intended use.

Regulatory agencies now demand comprehensive documentation, explicit human oversight, and robust data governance, and no longer tolerate "black-box" methods. While these rules may slow initial project timelines, they are intended to ensure that only safe, well-tested AI models are used to develop new medicines.

What are the new regulatory requirements for AI in pharma drug development in 2026?

In 2026, the FDA and EMA expect AI used in drug development to follow a defined roadmap: a clear context of use (COU), a thorough risk assessment, a robust validation plan, strong data governance, complete model documentation, formal change-control standard operating procedures (SOPs), and continuous lifecycle monitoring. Omitting critical components, especially change-control plans, can trigger major regulatory review delays.

The 2025 draft rulebook - seven steps, one context

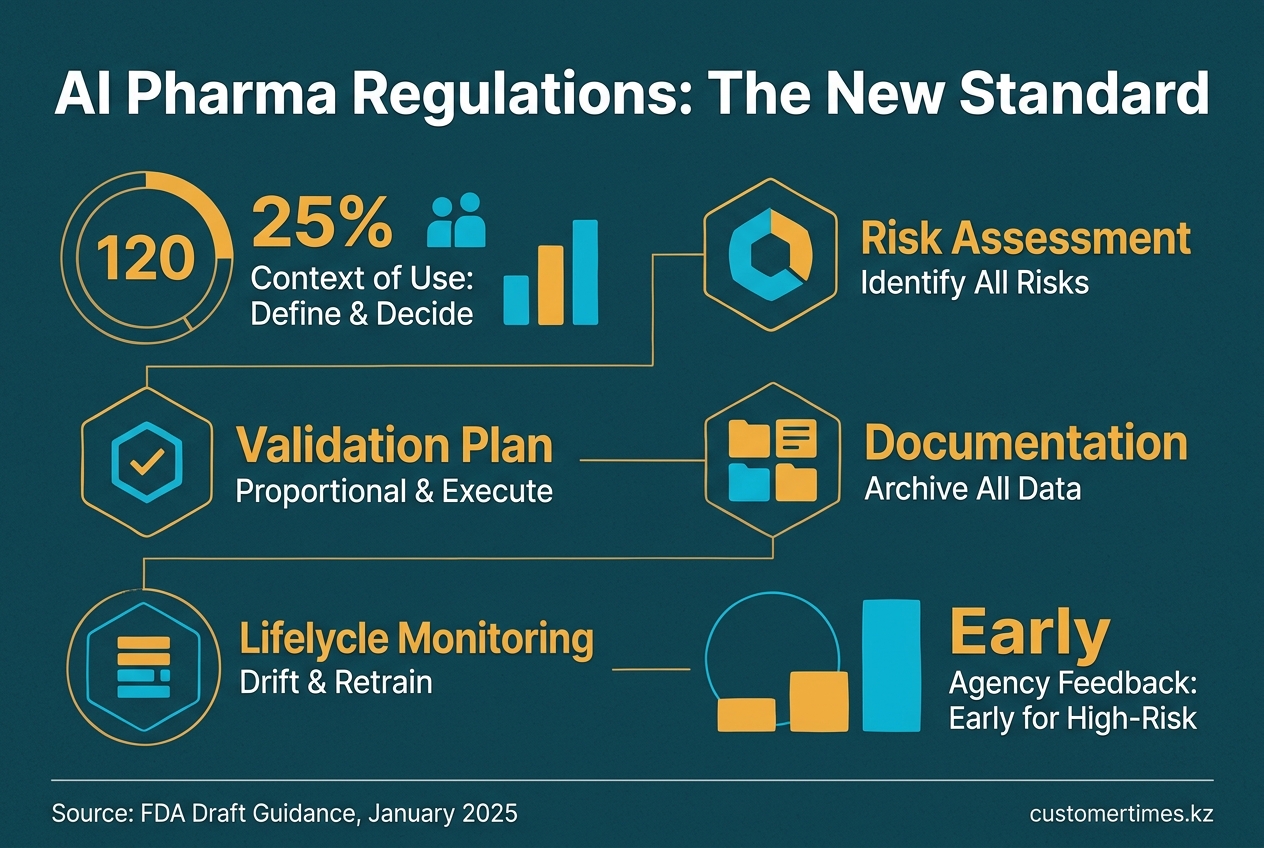

New regulations require sponsors to define the AI model's specific context of use and associated risks. They must then create and execute a proportional validation plan, document all methodologies, manage the model's lifecycle with change controls, and secure early agency feedback for high-risk applications.

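Released in January 2025, the FDA's draft guidance, "Considerations for the Use of Artificial Intelligence to Support Regulatory Decision-Making for Drug and Biological Products," frames AI validation as a seven-step, risk-based credibility assessment anchored to a specific context of use (COU). Sponsors are expected to: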

- Define the COU and the decision the model influences

- Identify all risks to patients, trial integrity, and product quality

- Create a validation plan proportional to the identified risks

- Obtain early FDA feedback on the plan

- Execute the validation plan (statistical tests, stress tests, cross-validation)

- Archive all methodology, raw data, results, and deviations

- Monitor the model post-deployment for drift and retrain under strict change control
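The execution step can be illustrated with one common statistical check. The sketch below is a minimal, standard-library Python example. It assumes a binary classifier with 0/1 held-out labels and numeric scores. It computes ROC AUC as the probability that a randomly chosen positive outscores a randomly chosen negative, then attaches a percentile-bootstrap confidence interval. The function names and defaults (2,000 resamples, 95% interval, fixed seed) are illustrative choices and are not prescribed by the guidance.

```python
import random
from typing import List, Sequence, Tuple


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """AUC = P(random positive scores above random negative); ties count 0.5. Labels are 0/1."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def bootstrap_auc_ci(
    labels: Sequence[int],
    scores: Sequence[float],
    n_boot: int = 2000,
    alpha: float = 0.05,
    seed: int = 0,
) -> Tuple[float, float, float]:
    """Return (point AUC, lower bound, upper bound) from a percentile bootstrap."""
    point = roc_auc(labels, scores)  # also checks that both classes are present
    rng = random.Random(seed)  # fixed seed so the reported interval is reproducible
    n = len(labels)
    stats: List[float] = []
    while len(stats) < n_boot:
        idx = [rng.randrange(n) for _ in range(n)]
        ys = [labels[i] for i in idx]
        if 0 < sum(ys) < n:  # skip resamples that contain only one class
            stats.append(roc_auc(ys, [scores[i] for i in idx]))
    stats.sort()
    k = int(alpha / 2 * n_boot)
    return point, stats[k], stats[n_boot - 1 - k]


# Usage: AUC with a 95% interval on a toy held-out set.
auc, lower, upper = bootstrap_auc_ci([0, 0, 1, 1, 0, 1], [0.1, 0.4, 0.35, 0.8, 0.2, 0.7])
print(f"AUC {auc:.2f} (95% CI {lower:.2f} to {upper:.2f})")
```

The pairwise AUC loop is quadratic, which is fine for illustration. For large validation sets, a rank-based formula or a vetted statistics library is the better choice.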

For high-risk applications such as dose selection, pivotal trial endpoints, or manufacturing release, the FDA now expects pre-submission meetings and algorithmic disclosure as detailed as traditional chemistry, manufacturing, and controls (CMC) documentation.
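The FDA draft guidance assesses model risk from two factors. Model influence is how much the AI output drives the decision. Decision consequence is the impact of a wrong decision. A minimal Python sketch of that assessment follows. The additive score and the three-tier cut-offs are assumptions for illustration only; they are not values taken from the guidance.

```python
from enum import IntEnum


class Level(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


def model_risk(influence: Level, consequence: Level) -> Level:
    """Combine model influence and decision consequence into a model-risk tier.

    Assumption: an additive score (2..6) cut into three tiers. The draft guidance
    names the two factors; this particular mapping is illustrative only.
    """
    score = int(influence) + int(consequence)
    if score >= 5:
        return Level.HIGH
    if score == 4:
        return Level.MEDIUM
    return Level.LOW


def needs_presubmission_meeting(risk: Level) -> bool:
    # Mirrors the expectation that high-risk uses are discussed with the agency early.
    return risk is Level.HIGH


# Usage: a model that alone drives dose selection vs. an exploratory triage model.
dose_model = model_risk(Level.HIGH, Level.HIGH)
triage_model = model_risk(Level.LOW, Level.MEDIUM)
print(dose_model.name, needs_presubmission_meeting(dose_model))      # HIGH True
print(triage_model.name, needs_presubmission_meeting(triage_model))  # LOW False
```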

"Sponsors should meet with the agency before using a high-risk AI model in a critical decision."

- FDA Draft Guidance, January 2025

The framework builds on substantial precedent: since 2016, agency reviewers have evaluated more than 500 submissions containing AI components, primarily in oncology and neurology.

The 2026 joint principles - ten commandments for the full lifecycle

In January 2026, the FDA and EMA aligned their expectations by jointly issuing the "Guiding Principles of Good AI Practice in Drug Development." Although non-binding, these ten principles signal what future regulatory requirements will look like:

- Human-centric design with clear human-override capabilities

- Risk-based evidence standards, not a one-size-fits-all approach

- Adherence to established standards (ISO 13485, GAMP 5, ICH Q9)

- A clear COU

- An appropriate mix of stakeholder expertise for that COU

- Robust data governance, including provenance and version control

- Thorough model development documentation and audit trails

- Performance metrics tied directly to clinical relevance

- Comprehensive lifecycle management with drift detection protocols

- Transparent communication between the sponsor and regulators

Because the EMA co-signed them, these principles link AI validation to the data-integrity rules of EU GMP Annex 11 (computerised systems) and to GxP inspections, so "black-box" excuses will not survive on-site audits.
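Annex 11 calls, based on a risk assessment, for a system-generated record of GMP-relevant changes and deletions (an audit trail). One way to make such a log tamper-evident is to chain entries by hash, as in the minimal Python sketch below. The `AuditTrail` class and its field names are hypothetical. A production system would also need access control, electronic signatures, and protected storage.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(record: Dict[str, Any]) -> str:
    # Canonical JSON (sorted keys) so the same content always yields the same hash.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


@dataclass
class AuditTrail:
    """Append-only log; each entry stores the hash of the entry before it."""

    entries: List[Dict[str, Any]] = field(default_factory=list)

    def append(self, user: str, action: str, detail: Dict[str, Any]) -> Dict[str, Any]:
        record = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "action": action,
            "detail": detail,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        record["hash"] = _digest(record)  # hash covers every field above, including prev_hash
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        """Recompute the chain; False if any stored entry was edited or reordered."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


# Usage: log a retraining and its QA approval, then simulate an after-the-fact edit.
trail = AuditTrail()
trail.append("analyst_01", "retrain", {"model": "tox-screen", "version": "2.1"})
trail.append("qa_02", "approve", {"model": "tox-screen", "version": "2.1"})
print(trail.verify())  # True
trail.entries[0]["detail"]["version"] = "2.2"  # tampering with a stored entry
print(trail.verify())  # False
```

Because each hash covers the previous entry's hash, editing or reordering any stored entry makes `verify()` return False. Removing only the newest entry goes undetected unless the latest hash is also recorded somewhere independent of the log.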

What a submission bundle now looks like

While regulators avoid rigid templates, a complete AI evidence package should contain the following components. Agencies frequently request the items rated Critical when they are omitted from the initial submission.

| Component | Typical artefacts | Review risk if absent |
|---|---|---|
| COU statement | Intended decision, success criteria | Major |
| Data governance dossier | Source tables, cleaning scripts, QC metrics | Critical |
| Model specification | Architecture diagram, hyper-parameters, training logs | Critical |
| Validation report | Internal/external splits, ROC/AUC, confidence intervals | Major |
| Explainability add-on | SHAP/LIME plots, rule extraction, expert mapping | Major |
| Change-control SOP | Version hashing, retraining triggers, rollback plan | Critical |
| Post-marketing plan | Performance dashboard, update cadence, signal limits | Major |
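A pre-submission check against the table can be automated. The minimal Python sketch below transcribes the component list and its review-risk ratings into a dictionary. It then reports which components are missing from a draft bundle, grouped by risk. The names (`BUNDLE_RISK`, `missing_components`) are hypothetical.

```python
from typing import Dict, List, Set

# Review risk if absent, transcribed from the table above.
BUNDLE_RISK: Dict[str, str] = {
    "COU statement": "Major",
    "Data governance dossier": "Critical",
    "Model specification": "Critical",
    "Validation report": "Major",
    "Explainability add-on": "Major",
    "Change-control SOP": "Critical",
    "Post-marketing plan": "Major",
}


def missing_components(submitted: Set[str]) -> Dict[str, List[str]]:
    """Group the components absent from a draft bundle by their review risk."""
    gaps: Dict[str, List[str]] = {"Critical": [], "Major": []}
    for component, risk in BUNDLE_RISK.items():
        if component not in submitted:
            gaps[risk].append(component)
    return gaps


draft = {"COU statement", "Model specification", "Validation report"}
gaps = missing_components(draft)
print("Critical gaps:", gaps["Critical"])
# Critical gaps: ['Data governance dossier', 'Change-control SOP']
```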

Analysis of early submissions indicates that packages missing a change-control SOP are likely to trigger a 90-day review clock stop, even if the model demonstrates high predictive accuracy.
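The change-control SOP row names three artefacts: version hashing, retraining triggers, and a rollback plan. A minimal Python sketch of all three follows under stated assumptions. Each release is fingerprinted with SHA-256 over its serialized weights and configuration. Drift is measured with the population stability index (PSI) over binned score or input distributions. A PSI above 0.25 triggers retraining. PSI and the 0.25 cut-off are common industry conventions, not agency requirements, and `ModelRegistry` and the other names are hypothetical.

```python
import hashlib
import math
from typing import List, Sequence


def model_version_hash(weights: bytes, config: str) -> str:
    """SHA-256 fingerprint of a release: serialized weights plus configuration."""
    h = hashlib.sha256()
    h.update(len(weights).to_bytes(8, "big"))  # length prefix keeps the two parts unambiguous
    h.update(weights)
    h.update(config.encode("utf-8"))
    return h.hexdigest()


def psi(expected: Sequence[float], actual: Sequence[float], eps: float = 1e-6) -> float:
    """Population stability index between two binned distributions (bin proportions)."""
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # guard against log(0) for empty bins
        total += (a - e) * math.log(a / e)
    return total


def retraining_triggered(baseline: Sequence[float], current: Sequence[float],
                         threshold: float = 0.25) -> bool:
    # 0.25 is a common rule of thumb for a significant shift; the SOP would fix the real value.
    return psi(baseline, current) > threshold


class ModelRegistry:
    """Hypothetical registry: append-only release history plus a pointer to the active version."""

    def __init__(self) -> None:
        self.released: List[str] = []
        self._active = -1

    def release(self, version_hash: str) -> None:
        self.released.append(version_hash)
        self._active = len(self.released) - 1

    @property
    def active(self) -> str:
        if self._active < 0:
            raise RuntimeError("no release yet")
        return self.released[self._active]

    def rollback(self) -> str:
        """Reactivate the previous release without deleting any history."""
        if self._active <= 0:
            raise RuntimeError("no earlier release to roll back to")
        self._active -= 1
        return self.active


# Usage: drift in binned model scores triggers retraining; the new release is rolled back.
registry = ModelRegistry()
v1 = model_version_hash(b"weights-v1", "lr=0.001")
registry.release(v1)
baseline = [0.25, 0.25, 0.25, 0.25]
current = [0.05, 0.15, 0.30, 0.50]
if retraining_triggered(baseline, current):
    v2 = model_version_hash(b"weights-v2", "lr=0.001")
    registry.release(v2)
    # ...if v2 then fails its acceptance tests:
    registry.rollback()
print(registry.active == v1, len(registry.released))  # True 2
```

Keeping the release history append-only and moving only the active pointer means a rollback leaves the full version record intact for an auditor.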

Velocity gap - why AI is outrunning the lab

Even as validation requirements become stricter, AI models continue to generate thousands of potential drug candidates every week. This creates a "Velocity Gap": AI proposes molecules, peptides, and formulations faster than GxP-compliant labs can synthesise or test them, leading to significant backlogs. To bridge this gap, sponsors are adopting a hybrid, model-informed approach, filtering AI predictions through Quantitative Systems Pharmacology (QSP) or physiologically based pharmacokinetic (PBPK) simulations before starting lab work. Sponsors using this approach report 23% fewer late-stage failures while remaining compliant with the new rules.
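The text does not say which QSP or PBPK models sponsors run. Full PBPK models simulate individual organs and physiology in dedicated, validated software. As a deliberately simplified stand-in, the Python sketch below screens AI-proposed candidates with a one-compartment pharmacokinetic model with first-order oral absorption. It keeps only the candidates whose simulated single-dose exposure falls inside a target window. The parameter values, thresholds, and names (`Candidate`, `passes_screen`) are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Candidate:
    """Hypothetical predicted PK parameters for one AI-proposed molecule."""
    name: str
    ka: float  # first-order absorption rate constant (1/h)
    ke: float  # first-order elimination rate constant (1/h)
    vd: float  # apparent volume of distribution (L)
    f: float   # oral bioavailability (fraction, 0 to 1)


def concentration(c: Candidate, dose_mg: float, t_h: float) -> float:
    """C(t) = F*D*ka / (V*(ka - ke)) * (exp(-ke*t) - exp(-ka*t)), in mg/L."""
    if math.isclose(c.ka, c.ke):
        raise ValueError("ka == ke requires the limiting form of the equation")
    coef = c.f * dose_mg * c.ka / (c.vd * (c.ka - c.ke))
    return coef * (math.exp(-c.ke * t_h) - math.exp(-c.ka * t_h))


def passes_screen(c: Candidate, dose_mg: float, min_conc_24h: float,
                  max_cmax: float, step_h: float = 0.1) -> bool:
    """Keep a candidate only if simulated single-dose exposure stays in the target window."""
    n_steps = round(24.0 / step_h)  # time grid from 0 to 24 h
    cmax = max(concentration(c, dose_mg, i * step_h) for i in range(n_steps + 1))
    return cmax <= max_cmax and concentration(c, dose_mg, 24.0) >= min_conc_24h


# Usage: only candidates that pass go forward to the (slower) GxP lab queue.
candidates = [
    Candidate("cand-A", ka=1.0, ke=0.1, vd=50.0, f=0.8),
    Candidate("cand-B", ka=1.0, ke=0.5, vd=50.0, f=0.8),  # cleared too quickly
]
shortlist = [c.name for c in candidates
             if passes_screen(c, dose_mg=100.0, min_conc_24h=0.1, max_cmax=2.0)]
print(shortlist)  # ['cand-A']
```

Cmax is read from a 0.1 h grid, so it slightly underestimates the true peak. That is acceptable for triage, but a real screen would use the analytical peak time or a validated simulator.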

From pilots to platforms - what sponsors change in 2026

- Isolated proof-of-concept teams are being integrated into cross-functional AI steering committees that include regulatory, QA, biostatistics, and IT security experts.

- Generative AI pilots now require a documented COU before budget approval, a response to findings such as a widely cited 2025 MIT report that about 95% of enterprise generative AI pilots delivered no measurable financial return.

- CRO contracts increasingly include FAIR-data clauses (Findable, Accessible, Interoperable, Reusable) to prevent regulatory objections to opaque, outsourced models.

- Vendors are embedding audit-trail APIs into their platforms from the start, avoiding costly retrofitting after an agency request.

"Regulatory clarity is one of the top three barriers to wider AI adoption in pharma."

- Industry survey cited in 2026 trends analysis

Cost and timeline impact - early metrics

Contrary to fears of added delays, early adopters report that the upfront investment in documentation shortens overall review cycles. A 2025 study of 42 FDA AI submissions found that applicants who held pre-submission meetings averaged 92 fewer review days than those who did not, a benefit that outweighed their internal preparation time.

Global ripple - EMA reflection and ICH momentum

The EMA's September 2024 reflection paper had already prioritized transparency, validation, and human oversight. The 2026 joint principles now accelerate global alignment and are expected to inform the ICH's forthcoming Q-AI guideline, which aims to harmonize AI validation terminology for regulators like Japan's PMDA and Health Canada. Consequently, sponsors planning multinational trials are designing protocols to meet both FDA and EMA standards from the outset.

What happens next

Regulatory agencies have indicated that formal guidance will codify the principles within 18 to 24 months. This guidance is expected to introduce a tiered compliance system, with a lighter burden for AI used in exploratory research and full GMP-style validation for AI that sets product specifications or manages lot release. Until then, regulators advise sponsors to document all AI activities as if the rules were already in effect, since any model used in an application from 2026 onward will be audited against this framework.

Firms that treat AI validation as an afterthought risk the same outcome as those who were slow to adopt electronic submissions: refuse-to-file letters or, worse, market-entry delays once competitors with audit-ready AI reach the market first.